Gemma 4: Google’s Open-Weight AI for Privacy and Control

Gemma 4: Google’s open-weight AI bringing more control, privacy, and customization

In a landscape increasingly dominated by artificial intelligence tools, Large Language Models (LLMs) are transforming the way we interact with technology. Among them, Gemma 4, a model developed by Google DeepMind, stands out. But what makes it special? How does it work from a technical perspective, and how does it differ from competing models?

Here is a complete guide to Gemma 4.

Part 1: What is Gemma 4 and how does it work?

At its core, Gemma 4 is a family of open-weight LLMs. That means we are not just talking about a chatbot you can use on the web, but about models whose weights can be downloaded, run, adapted, and integrated into your own projects.

This changes the picture significantly compared with models that are accessible only through APIs. With Gemma 4, in fact, you are not forced to rely on an external service for every request: you can choose to run it locally, on your own hardware or even on your personal computer, within your own infrastructure or controlled environments. In addition, you do not necessarily face a per-call cost as happens with many API-based services: of course, hardware, energy, and infrastructure management costs still need to be considered.

Another key point is that Gemma 4 can also run offline, in compatible configurations. This means that, when properly installed locally, it can continue working even without an Internet connection. And it can do so on computers that are accessible to many users: I am using the intermediate version, e4b, on a MacBook with M1 Pro!

This is where privacy comes in. Gemma 4 is not private by definition simply because it is open-weight: privacy always depends on where you run it and how you build the application around the model. But if you choose a local or on-premise deployment, you can avoid sending prompts, documents, and sensitive data to third-party cloud services, keeping information processing within a far more controlled, or even sealed-off, perimeter.

The basic logic: next-token prediction

From a technical perspective, Gemma 4 remains a Large Language Model. It does not know the world the way a person does, but works by recognizing statistical patterns within language.

The fundamental principle behind its operation is next-token prediction. When it receives a prompt, it does not retrieve a ready-made answer from a hidden archive: it analyzes the sequence of words or tokens it has received, evaluates the context, and calculates which tokens are most likely to come next, generating the response step by step.

Why it is different from many other AI tools

The key difference is that Gemma 4 is not just a model to query, but a technological foundation that can be brought into products, processes, and business environments. It can be used as a local assistant, as the engine behind internal chatbots, as support for software development, as a component in document workflows, or as a specialized model through fine-tuning.

This is precisely what makes Gemma 4 interesting from a project perspective as well: not just as a technology to test, but as a component to integrate into real workflows and tools. When artificial intelligence truly enters business processes, the issue is no longer only the quality of the answer, but the ability to build systems that are useful, governable, and aligned with the business. From this perspective, it can also be useful to explore the topic of AI & Automation, meaning the way AI models, workflows, and integrations can become an operational part of the organization.

The process in brief

- Transformer architecture: Gemma 4 is built using the Transformer architecture. This architecture allows the model to assign weight to words within context, understanding relationships, dependencies, and coherence among the different elements of a sentence.

- Large-scale training: the model is pre-trained on vast amounts of data. In this phase it learns linguistic structures, syntactic regularities, widespread knowledge, and writing patterns.

- Instruction tuning and specialized variants: Gemma 4 can be distributed in versions optimized to better follow instructions and operational interactions, as well as be adapted to specific use cases.

- Local or remote generation: when it receives a prompt, the model generates the answer token by token. The difference, compared with many closed services, is that this process can also happen in your local environment if you choose a setup compatible with the resources required by the model.

What this means in practice for companies and professionals

- No mandatory need for continuous connectivity: in compatible local configurations, Gemma 4 can also work offline.

- More control over data: prompts and documents can remain within your IT environment, without being sent to external providers.

- More predictable costs: you do not necessarily depend on a per-API-call price, even though infrastructure costs still need to be considered.

- Greater customization: you can integrate, test, and adapt the model more easily based on your processes and application domain.

This becomes even more interesting when looking at how AI is also changing brand visibility. Today it is not enough just to be present on search engines, but to be correctly understood, selected, and returned by generative models. To explore this shift further, it may also be useful to read how AI is changing brand positioning from SEO to GEO.

Part 2: Why use Gemma 4?

The advantage of Gemma 4 lies not only in its power, but above all in the way it is made available.

After all, the point is not just having access to a powerful model, but understanding what role these systems are starting to play in building visibility, authority, and recommendations. It is the same logic we observe in the AI Observatory, where the behavior of generative models is analyzed to understand how they influence brand presence and perception.

1. Open weights: the major advantage

This is its main strength. While many competitors operate as “black boxes,” where interaction is only possible through APIs without access to internal mechanisms, Gemma 4 is an open-weight model.

- What does it mean? The model’s weights, meaning the numerical values that represent the knowledge learned during training, are made public and downloadable.

- Why does it matter? Researchers and developers can download the model and run it on their own hardware, achieving a much higher level of transparency and control.

2. Privacy and local control

This is one of the aspects that makes Gemma 4 particularly interesting in a business context. The possibility of running it on-premise, on internal servers, dedicated workstations, or owned devices, changes the way an artificial intelligence system can be designed. It is not just about obtaining useful responses, but about doing so while maintaining much more direct control over the entire data lifecycle.

Because Gemma 4 can be run on-premise, on your own servers or devices, companies can manage sensitive data in a completely isolated environment. There is no need to send private data to third-party cloud services, with clear benefits in terms of privacy protection and data governance.

3. Extreme customization

This is where Gemma 4 shows one of its most relevant advantages from an application perspective. A general-purpose model can be very useful for common tasks, but when it comes into contact with specialized processes, technical terminology, or complex business contexts, its limits quickly emerge. The difference between an assistant that “answers well” and one that is truly useful often lies entirely in its ability to adapt to a specific domain.

If you need to build a system capable of speaking the language of maritime law, genetic medicine, industrial mechanics, compliance, or technical customer care, you can take Gemma 4 and subject it to fine-tuning on a proprietary dataset. In this way, the model no longer works solely from its generalist base, but learns terminology, response structures, operational logic, and priorities typical of your sector.

The result is not simply a model that is “more informed.” It is a model that can become more relevant, more consistent, and more aligned with the real context in which it will operate. This means answers that are more aligned with the correct terminology, greater precision in explanations, better understanding of requests, and behavior closer to the expectations of those who use it every day.

There is also another decisive aspect: the ability to customize the model using proprietary data. Internal manuals, operating procedures, company FAQs, technical documentation, resolved tickets, reports, sector glossaries, and document archives can become the foundation for creating an assistant that reflects the organization’s knowledge base. This makes it possible to turn an LLM into an asset that is much closer to the real way the company thinks, works, and communicates.

In short, the real strength of customization is not only making the model more knowledgeable. It is making it more useful. Closer to the sector, more consistent with processes, more aligned with the organization’s language, and therefore more capable of generating real value in day-to-day work.

Part 3: How is an open model trained?

When talking about customizing an open model such as Gemma 4, the right process is fine-tuning, meaning targeted refinement on a specific domain. At this stage, the decisive factor is not only computational power, but above all the quality of the dataset used.

To transform a general-purpose model into an expert assistant for a specific sector, such as compliance, tax law, medicine, or technical customer care, a structured approach is required, divided into several phases.

Phase 1: collecting the corpus

A model’s knowledge does not come from some kind of “magical training,” but from the data it is given. If you want Gemma 4 to become competent in a specific field, you need to build a corpus of content that truly represents the structure of knowledge and the language of that sector.

What to collect for the training dataset

- Reference documents: operating manuals, regulations, laws, company procedures, reports, protocols, and technical documentation.

- Question-and-answer examples: these are among the most valuable types of data, because they show the model how to respond correctly to specific requests.

- Transcripts of real interactions: emails, tickets, support chats, or call transcripts also help transfer the brand’s tone of voice to the model.

- Specific terminology: glossaries, definitions, relationships between technical terms, and industry-specific vocabulary.

Quality beats quantity

It is better to have a few hundred or a few thousand very well-written, expert-verified, and consistent examples than large volumes of disorganized, redundant, or weakly relevant content. In a fine-tuning project, dataset quality directly affects the reliability of the final result.

Phase 2: structuring and cleaning the data

Raw data cannot be used immediately. It must be cleaned, normalized, and presented in a format that simulates a real request or a clear instruction-response structure.

Data cleaning

- Noise removal: eliminating duplicates, unnecessary headers, footers, superfluous URLs, and irrelevant text.

- Normalization: standardizing terminology to avoid inconsistent variants of the same concept.

Prompt formatting

An LLM is not refined simply by uploading documents in bulk. It needs structured interaction examples. For this reason, the dataset is often organized as a sequence of instructions, prompts, and expected answers.

[Instruction]: You are an expert in commercial law.

[Prompt]: What are the requirements of a mandate agreement?

[Expected answer]: A mandate agreement requires the appointment of the agent, acceptance by the principal, and a clear definition of the operating conditions.Phase 3: technical fine-tuning

This is the most technical phase. In general, the entire model is not retrained from scratch, because that would be extremely costly. Instead, Parameter-Efficient Fine-Tuning techniques such as LoRA or QLoRA are used, allowing intervention only on a limited subset of parameters.

What LoRA is

LoRA makes it possible to adapt a pre-trained model by modifying only a small fraction of its parameters. In this way, the model’s general knowledge, such as grammar and language, is preserved while new, domain-specific skills are added.

What happens in practice

Gemma 4 is loaded together with the prepared dataset. The system learns to respond better to prompts related to the target sector by updating only the necessary components. This makes the process more sustainable from an economic perspective and more realistic for companies, research labs, and development teams.

Required resources

Fine-tuning generally requires high-end GPUs or dedicated cloud infrastructure. The amount of resources needed depends on the size of the model, the amount of data, and the level of project optimization.

Phase 4: testing, review, and deployment

The work does not end when training is complete. A fine-tuned model must be thoroughly tested, validated by qualified people, and then integrated into the final application.

- Testing: the model must be tested with scenarios not present in the dataset, in order to assess its ability to generalize.

- Human validation: a domain expert must review the answers, identify possible errors or hallucinations, and correct the data where necessary.

- Deployment: once performance is stable, the model is integrated into the chatbot, internal assistant, or API that will make it operational.

Operational summary

Essential checklist for training an open model

| Objective | Key action | Final output |

|---|---|---|

| Knowledge | Collect documents, procedures, and question-answer pairs. | A structured and clean dataset. |

| Behavior | Define tone of voice, style, and response patterns. | Consistent conversation examples. |

| Training | Apply techniques such as LoRA or QLoRA to the base model. | A model fine-tuned on the domain. |

| Control | Test complex scenarios and correct errors or hallucinations. | A more reliable assistant ready to use. |

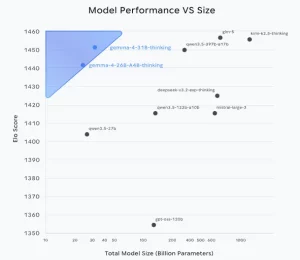

Part 4: Gemma vs other chatbot LLMs

When comparing models such as Gemma 4 with closed systems such as GPT-5 or Claude, the crucial difference is not only the quality of the answer, but above all the development philosophy, accessibility, and level of control left to the user.

Comparison between open-weight models and proprietary models

| Feature | Open-weight models (e.g. Gemma 4) | Proprietary and closed models (e.g. GPT-5, Claude) |

|---|---|---|

| Accessibility | High. The weights can be downloaded. | Limited. Access is only via API. |

| Transparency | Maximum. Researchers can inspect and modify the model. | Minimal. The internal functioning is not accessible. |

| Privacy and deployment | Excellent. The model can be run locally. | Cloud-dependent. Requires sending data to external services. |

| Customization | Deep. Enables fine-tuning on private data. | Limited. Context can be added, but the base model cannot be changed. |

| Control | Total. The user decides where and how to run the model. | Reduced. The user depends on the provider’s policies. |

In short: who should use what?

- Choose Gemma 4 if: you are a developer, researcher, or company handling sensitive data and need total control over the model. If privacy, local hosting, and customization are strategic priorities, Gemma 4 is a very strong choice.

- Choose proprietary models if: you are looking for maximum ease of use, have no particular constraints around privacy or local hosting, and want immediate results with minimal operational effort.

Conclusion

Gemma 4 is not trying to be simply another powerful chatbot. Rather, it represents a statement of intent: artificial intelligence can be more transparent, more customizable, and more accessible.

For this reason, the issue is not only about technology in the strict sense, but also about the way organizations choose to adopt it, govern it, and integrate it into everyday activities. It is no coincidence that the debate on artificial intelligence is increasingly intertwined with processes, roles, skills, and the transformation of work. On this front, another useful insight is the one dedicated to new AI opportunities and challenges in the workplace.

By offering a high-quality open-weight model, Google gives developers and companies stronger, safer foundations that are more firmly under their control.

FAQ about Gemma 4

What is Gemma 4?

Gemma 4 is a family of open-weight language models. In practice, it is not just a tool to use online, but a technological foundation that can be downloaded, run, and integrated into your own projects.

What does it mean that Gemma 4 is an open-weight model?

It means that the model’s weights, that is, the parameters learned during training, are made available. This allows developers, researchers, and companies to run the model on their own infrastructure, study it, adapt it, and use it with a much higher level of control than models accessible only via API.

Is Gemma 4 free?

Gemma 4 can be downloaded free of charge. It does not necessarily follow the per-call pricing logic typical of AI services based on APIs. The model can be downloaded and used, but that does not mean using it is cost-free: hardware, energy, setup, maintenance, and infrastructure still need to be considered.

Can Gemma 4 work without an Internet connection?

Yes. In compatible configurations, Gemma 4 can also be run locally and continue working without a continuous Internet connection. This is one of the aspects that makes it interesting for controlled environments or use cases requiring greater operational autonomy.

Does Gemma 4 automatically guarantee privacy?

No. Privacy does not depend only on the model, but above all on where it is run and how the application using it is designed. If Gemma 4 runs locally or on-premise, however, prompts, documents, and sensitive data can remain within your own technological perimeter, with much more direct control.

What is the difference between Gemma 4 and a proprietary model such as GPT or Claude?

The main difference concerns the level of access and control. With a proprietary model, you generally interact through APIs and have no visibility into the internal functioning. With an open-weight model such as Gemma 4, instead, you can download the model, run it in your own environment, and adapt it more deeply to your needs.

How does Gemma 4 work technically?

Like other large language models, Gemma 4 is based on next-token prediction. It receives a prompt, analyzes the context, and generates the answer one token at a time, progressively building a coherent text.

Can Gemma 4 be customized for a specific sector?

Yes. One of Gemma 4’s most important advantages is the possibility of customizing it through fine-tuning. This makes it possible to adapt the model to technical language, procedures, proprietary datasets, and specialist use cases, making it much more aligned with a specific domain.

What is needed to train or refine a model such as Gemma 4?

Above all, it requires a high-quality dataset that is well structured and coherent with the domain you want to cover. From a technical perspective, fine-tuning is often carried out using techniques such as LoRA or QLoRA, which make it possible to adapt the model without having to retrain it completely from scratch.

For which companies or professionals does it make sense to evaluate Gemma 4?

Gemma 4 is particularly interesting for companies, IT teams, developers, technical departments, professional firms, and organizations that need more control over data, infrastructure, and customization. It is especially relevant when privacy, local execution, and model specialization become concrete project requirements.

Bibliography and useful sources

Google AI for Developers: Gemma

Official page dedicated to the Gemma model family, with overview, documentation, and technical references useful for understanding its structure, usage, and application scenarios.

Google AI for Developers: Gemma 4 model card

Official Gemma 4 model card, useful for exploring the model’s capabilities, deployment, supported languages, limitations, and usage scenarios in greater depth.

Google DeepMind: Gemma

Institutional Google DeepMind page dedicated to Gemma, useful for framing the project, the positioning of the model family, and the strategic vision behind its development.

Google Blog: Introducing Gemma 4

A Google-published deep dive on the release of Gemma 4, useful for contextualizing goals, use cases, and development scenarios.

Hugging Face: Gemma

Repository and community resources useful for understanding how Gemma models are distributed, integrated, and tested in real development environments.

HT&T Magazine: The evolution of artificial intelligence beyond Turing’s predictions

A useful deep dive to contextualize Transformer architecture and the evolution of language models within the broader AI landscape.

Continua a leggere

13 minutes of reading

And it consumes less energy.

To return to the page you were visiting, simply click or scroll.