Anthropic and AI in the workplace: young people at risk


Anthropic, AI and work: the real risk is not mass unemployment, but the collapse of junior work

Anthropic’s new study on AI in the workplace says that AI is not yet causing unemployment to surge. But stopping there would be a mistake: the most important signal concerns hiring, training, and access to professions. And it is a far more structural signal than it may seem.

We already reflected on this topic in our in-depth article on how artificial intelligence is changing the future of work, but Anthropic’s report adds a more specific element: the possible impact on junior work and on the development of skills.

What Anthropic’s report measures about AI and work

When it comes to artificial intelligence and the labor market, public debate still tends to swing between two opposite extremes. On the one hand, there is the apocalyptic narrative: millions of jobs wiped out, professions made obsolete, offices emptied out. On the other, there is the reassuring reflex: AI helps, speeds things up, suggests, but in the end does not really change employment balances.

Anthropic’s report Labor market impacts of AI: A new measure and early evidence is interesting precisely because it tries to move beyond this polarization. Its value, however, lies not only in the answer it offers, but in the question it forces us to ask: is AI eliminating jobs, or is it transforming the way people enter work, grow within organizations, and build the skills of the future?

This is the most useful perspective from which to read the report: not only as a snapshot of employment, but as an early indicator of a broader transformation affecting team structures, internal training, seniority composition, and organizational models.

The key point not to miss: the most underestimated risk is not immediate mass unemployment, but a progressive compression of entry-level work. If junior roles are reduced, the pipeline that forms tomorrow’s senior professionals is weakened.

What Anthropic says

The core of the report is a new metric called observed exposure.

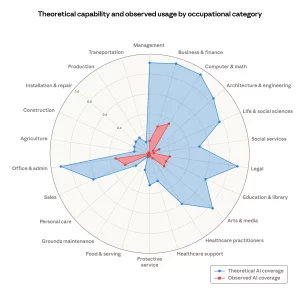

In essence, Anthropic combines two levels of analysis: on the one hand, the theoretical feasibility of a task using large language models; on the other, real-world use observed in work contexts. The goal is to distinguish what AI could do from what it is already doing, in other words its actual adoption in work settings.

This is an important distinction. Over the last two years, many analyses have reasoned mainly in terms of theoretical potential, fueling very drastic interpretations. Anthropic instead tries to introduce a more concrete criterion: it is not enough to know that an activity is technically automatable, you also need to understand whether that automation is actually showing up in professional workflows.
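The distinction between what AI could do and what it is already doing can be made concrete with a small sketch. This is not Anthropic’s formula: the `Task` fields, the time-share weighting and the sample numbers are all assumptions, invented here only to show how a theoretical measure and an observed measure can diverge for the same occupation.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    time_share: float      # hypothetical: fraction of working time spent on the task
    feasible: bool         # hypothetical: could a language model plausibly do it?
    observed_usage: float  # hypothetical: 0..1 signal of real AI use on this task


def theoretical_exposure(tasks):
    """Share of working time spent on tasks an LLM could in principle perform."""
    return sum(t.time_share for t in tasks if t.feasible)


def observed_exposure(tasks):
    """Share of working time on feasible tasks, scaled by how much AI is actually used."""
    return sum(t.time_share * t.observed_usage for t in tasks if t.feasible)


# Invented example occupation; none of these values come from the report.
tasks = [
    Task("write code", 0.5, True, 0.8),
    Task("review requirements", 0.3, True, 0.2),
    Task("on-site hardware setup", 0.2, False, 0.0),
]

theoretical = theoretical_exposure(tasks)
observed = observed_exposure(tasks)
print(f"theoretical={theoretical:.2f} observed={observed:.2f} gap={theoretical - observed:.2f}")
```

In this toy setup the observed value can never exceed the theoretical one, and the difference between the two is the part of the potential that has not yet shown up in actual workflows.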

The results are already enough to correct some widespread simplifications. The most exposed occupations do not coincide with manual work or with roles traditionally seen as weaker. Instead, many cognitive, office-based, digital and language-heavy professions emerge: programmers, customer service representatives, data entry workers, medical documentation specialists, market analysts, financial roles and technical support roles.

The point, then, is not qualification in the traditional sense, but how far activities can be described, standardized and formalized. Generative AI enters more easily where tasks are digital, structured, repetitive, describable and verifiable. And this affects many jobs that for years were assumed to be too complex to be truly touched.

It is no coincidence that this impact is concentrated above all in cognitive, language-based and structured activities. It is a theme we had already touched on in the article How artificial intelligence works with our brain, which shows how AI is already changing the way we learn, work and make decisions.

Another relevant aspect is the gap between technical capability and actual use. In several occupational categories, the potential of the models is far higher than their concrete adoption in companies. This may seem reassuring, but if read carefully it says something else: not that the risk is lower, but that the transformation is still in its absorption phase.

The most important insight

The fact that AI can do more than companies are currently using it for does not reduce the scale of the phenomenon. It suggests, rather, that we are still in its early stage. First, processes change; then hiring policies; then team architecture. Only afterward does the change become mature enough to appear clearly in macro-level numbers.

Which workers are currently most exposed to AI?

Anthropic’s report shows that the workers most exposed to artificial intelligence are not manual or lower-skilled workers, but many cognitive and office-based roles. At the top of the ranking are software programmers, with observed coverage of 74.5%, followed by customer service workers at 70.1%, data entry workers at 67.1%, and medical documentation specialists at 66.7%.

At the opposite end, around 30% of workers show zero exposure. This group includes professions such as cooks, mechanics, lifeguards and bartenders: roles in which physical presence, direct interaction with people and environments, immediate adaptability and situational judgment matter. These are activities that, at least for now, cannot easily be absorbed by a language model.

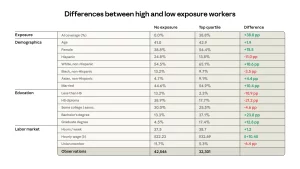

There is another point worth noting. The workers most exposed to AI are more likely to be women, and tend to be more educated, better paid and older on average. This makes the picture even more interesting, because it challenges one of the most superficial narratives about automation: it is not primarily the profiles traditionally considered most fragile that are at the center of the initial impact, but many professions belonging to structured cognitive work.

This is precisely one of the report’s most relevant aspects: AI is not primarily hitting low-paid or traditionally “routine” work, but rather activities that can be codified, standardized and turned into predictable operational flows. And this makes simplistic readings of AI disruption in the labor market much harder to sustain.

Why AI may hit junior work first

One of the most cited passages of the report concerns the fact that, at least for now, there is no strong evidence of a systematic increase in unemployment among the most exposed occupations. This is a useful point and deserves attention, but it is not the most interesting one.

The most delicate signal, within the group considered by Anthropic, concerns young people between the ages of 22 and 25. In high-exposure occupations, Anthropic observes a decline in entry into new jobs compared with 2022. The authors of the report themselves urge interpretative caution, and rightly so, but the direction of the signal is already significant.

Here lies the report’s most interesting and also most critical reading: AI may not immediately destroy existing jobs, but it may compress access to future jobs. And that is a much less visible, much slower, but also much more structural impact.

If a company can produce the same output with five seniors supported by AI instead of five seniors plus two juniors, it does not necessarily have to lay anyone off today: it can simply decide not to hire tomorrow. The problem is that if those juniors never join, they do not accumulate experience, they do not develop a craft, they do not acquire context, and they do not become the mid-level or senior professionals of the day after tomorrow.

This is where the issue moves beyond labor economics in the strict sense and becomes an organizational and training issue. It does not concern only the number of people employed, but the ability of a system to generate skills over time.
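The pipeline argument can be illustrated with a toy simulation. It is only a sketch under stated assumptions: the 10% yearly senior attrition, the three years needed to become senior, and one junior hired per year are invented parameters, not figures from the report, and the model ignores junior attrition and external senior hiring.

```python
def simulate_seniors(years, seniors, juniors_hired_per_year,
                     senior_attrition, years_to_senior):
    """Return the senior headcount at the end of each simulated year.

    All parameters are hypothetical. Juniors are tracked in yearly cohorts
    and become senior after `years_to_senior` full years.
    """
    cohorts = [0.0] * years_to_senior  # cohorts[i]: juniors with i years of experience
    history = []
    for _ in range(years):
        promoted = cohorts.pop()                          # most experienced cohort is promoted
        cohorts.insert(0, float(juniors_hired_per_year))  # this year's new hires
        seniors = seniors * (1 - senior_attrition) + promoted
        history.append(seniors)
    return history


# Scenario A: the company keeps hiring one junior per year.
with_hiring = simulate_seniors(6, 5.0, 1, 0.10, 3)
# Scenario B: the company stops hiring juniors because seniors use AI.
no_hiring = simulate_seniors(6, 5.0, 0, 0.10, 3)

for year, (a, b) in enumerate(zip(with_hiring, no_hiring), start=1):
    print(f"year {year}: with hiring {a:.2f} seniors, without hiring {b:.2f} seniors")
```

In the first three years the two scenarios look identical, which is the point of the argument: the cost of not hiring only becomes visible once the missing cohorts would have reached seniority.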

This interpretation is also consistent with other empirical studies on generative AI at work. In several contexts, the main effect does not seem to be immediate substitution, but rather the redistribution of productivity and competence. This can help less experienced workers who are already in place, while at the same time reducing incentives to hire new entry-level profiles.

The limits of the Anthropic report: method, scope and bias

A more critical perspective is needed here. The report is serious, well built, and more useful than a great deal of superficial commentary. But its main metric includes a structural limitation: part of the analysis is based on use cases observed within the Anthropic ecosystem.

This means that observed exposure is not a perfectly neutral measure of the labor market as a whole. It is a measure mediated by a product, a user base, a usage logic, and specific adoption patterns. Put even more directly: the report observes work through Claude’s window.

This is not a reason to dismiss it. On the contrary, its proximity to real usage data is precisely what makes it more interesting than many theoretical estimates. But it should not be turned into a definitive verdict. The real market is already multi-model, multi-tool and multi-process. Companies use ChatGPT, Copilot, Gemini, vertical agents, embedded tools inside work software, APIs, automations and proprietary stacks.

There is also a second, even more substantial limitation. Professions are not simple sums of tasks, but combinations of execution, judgment, responsibility, coordination, context and trust. Automating one portion of work does not automatically mean replacing a role. Task analysis is extremely useful for understanding where AI enters, but far from sufficient for understanding where work truly disappears.

Finally, there is a third limitation to consider: the report mainly observes the U.S. labor market, which has historically been faster to adopt new technologies. In Italy, where the economic fabric is largely made up of small and medium-sized enterprises, it is plausible that the organizational adoption of AI will follow slower timelines. This does not reduce the report’s relevance, but it does suggest reading its results as an early signal rather than as a picture immediately transferable to the Italian context.

Why high exposure to AI does not automatically mean less work

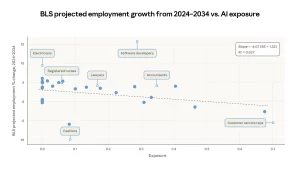

Another important point in the report concerns the relationship between AI exposure and employment growth projections. The finding is interesting, but it should be read with caution. The risk of a mechanical interpretation is very high.

The reason is simple: high exposure does not automatically equate to occupational decline. Some roles may be highly exposed and still continue to grow. In some cases, AI does not eliminate work, but changes its scope, organization and productivity. In other cases, it may even increase overall demand for that kind of function.

This is particularly true for many technical, analytical and digital roles. The most frequent mistake is to confuse exposure with destiny. Measuring exposure is useful; using it as an automatic prophecy is wrong.

How is AI changing internal training?

The most underestimated part of this entire debate concerns the organization of work. Most companies are measuring AI through efficiency KPIs: time saved, tasks completed, content produced, tickets handled, cost reductions. These are understandable metrics, but they are not enough.

We also discussed this distinction between apparent signals and real impact on results in The great illusion of metrics: ROAS, AI and real profit.

What many organizations are not yet measuring is the resilience of their own internal learning model. If junior activities are compressed, automated or absorbed by a few seniors supported by AI, how are tomorrow’s professionals trained? Who will gain experience through simple but formative activities? Who will build the operational memory that today is acquired by correcting mistakes, observing seniors, managing repetitive cases and accumulating context?

“If you do not hire a junior today because you have AI, you will not have a senior tomorrow.”

This is the real systemic risk. Not next quarter’s payroll, but the quality of the skills pipeline in 2028, 2029 and 2030. AI does not just modify individual productivity. It reshapes the learning chain inside the company.

That is why the most mature companies should begin asking more advanced questions: which roles are at risk of being hollowed out? Which seniority levels are we no longer cultivating? How is the balance between senior, mid-level and junior profiles changing? Which activities remain human because they depend on judgment, relationship, responsibility and context?

The most radical visions: Elon Musk and Sam Altman on the future of work

Alongside the data observed by Anthropic, it is worth considering the more radical predictions advanced by some of the key figures in the race for artificial intelligence. They help us understand the cultural and strategic climate in which today’s debate on work is moving.

Elon Musk: toward an economy in which work becomes optional

Elon Musk describes an extremely far-reaching scenario. In his view, the combination of AI, humanoid robotics and abundant energy could lead, within a few years or decades, to a world in which human work is no longer an economic necessity, but a personal choice.

It is a vision of near-total automation, in which the problem would no longer be finding work, but redefining the very meaning of work.

Sam Altman: some jobs will disappear, but the system will reconfigure itself

Sam Altman adopts a less utopian tone, but one that is no less disruptive. For some time he has argued that the shape of jobs will change profoundly, that some people will lose their roles, and that the relationship between capital, labor and social protection will have to be rethought.

At the same time, he insists on the idea that we will not be facing occupational destruction alone, but also a redefinition of work: new tools, new functions and new economic activities will emerge precisely thanks to AI.

The difference between prediction and evidence

The decisive point is this: Musk’s and Altman’s visions should be read as strategic and political forecasts, not as empirical proof of what is already happening. This is precisely where Anthropic’s report becomes useful: it brings the discussion back from the realm of radical declarations to that of observable signals.

In other words, Musk and Altman reason about where AI could take us. Anthropic instead tries to measure where, concretely, AI is already beginning to intervene in the labor market.

Conclusions

Anthropic’s merit is that it has brought the discussion about AI’s impact on work back into a more measurable and less ideological framework. Its limitation is that it measures a transition that is still ongoing, and it measures it through a necessarily partial window. But that is precisely why the report is useful.

The most superficial reading would say: AI is not causing mass unemployment, therefore the alarm is excessive. The more mature reading says something else: the change has already started, but it may show up first in processes and hiring, rather than in layoffs.

The most serious impacts may not appear where everyone is looking. Not in the loudest headlines. Not in the immediate end of human work. But in the silent contraction of entry-level work, in the shrinking number of opportunities to learn, and in the transformation of a profession into a sequence of outputs governed by a few experts assisted by many models.

If this reading is correct, then the most important question is not only how many jobs AI will destroy, but how many professionals it risks preventing from coming into being.

What should companies, HR teams and managers do in the face of AI?

The smartest reaction is neither to deny AI’s impact nor to chase a catastrophic narrative. More mature metrics need to be built. Not only productivity per worker, but also onboarding quality, learning speed, dependency on seniors, the rate of escalation toward humans, the resilience of internal training, and the ability to build competencies that are not based solely on execution.

The issue is not human versus machine, but designing organizations that know how to use AI without emptying out the path through which people are trained.

FAQ

Does Anthropic’s report say that AI is causing mass unemployment?

No. At least for now, the report does not show a systematic increase in unemployment in the most exposed occupations. The most delicate signal instead concerns the slowdown in young people entering some high-exposure jobs.

Which jobs are the most exposed according to Anthropic?

Among the most exposed roles are programmers, customer service representatives, data entry workers, medical documentation specialists, market analysts and financial roles. More broadly, the activities most affected are cognitive, structured, digital and language-based ones.

What does observed exposure measure?

It is a metric that combines the theoretical possibility that a language model can perform a task with real-world use observed in work contexts. It is meant to distinguish AI’s technical potential from its concrete adoption at work.

Why can the risk for juniors be more important than the unemployment figure?

Because a market that stops hiring entry-level profiles may appear stable in the short term, but in the medium term it weakens the skills pipeline, reduces professional mobility and makes the training of future senior profiles more fragile.

Does high exposure to AI mean a job will disappear?

No. Exposure is not destiny. Some roles will be compressed, others will be transformed, and others may still grow because AI increases their productivity or expands overall demand.

Bibliography

Anthropic Research: Labor market impacts of AI: A new measure and early evidence (PDF paper, full version published by Anthropic).

NBER: Generative AI at Work, Brynjolfsson, Li, Raymond.

BLS: Employment Projections 2024–2034.
